Remember Heartbleed?

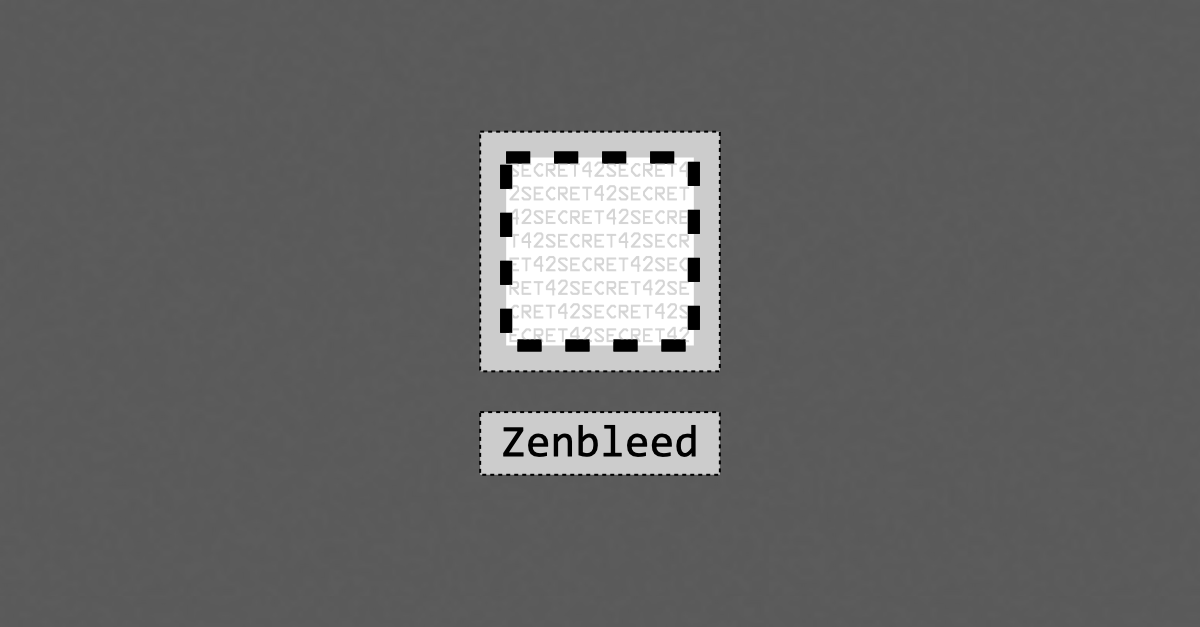

That was the bug, back in 2014, that introduced the suffix -bleed for vulnerabilities that leak data in a haphazard way that neither the attacker nor the victim can reliably control.

In other words, a crook can’t use a bleed-style bug for a precision attack, such as “Find the shadow password file in the /etc directory and upload it to me,” or “Search backwards in memory until the first run of 16 consecutive ASCII digits; that’s a credit card number, so save it for later.”

In Heartbleed, for example, you could trick an unpatched server into sending a message that was supposed to be at most 16 bytes long, but that wrongly included up to about 64,000 additional bytes tacked on the end.

You didn’t get to choose what was in those 64,000 plundered bytes; you just got whatever happened to be adjacent in memory to the genuine message you were supposed to receive.

Sometimes, you’d get chunks of all zeros, or unknown encrypted data for which you didn’t have the decryption key…

…but every now and then you’d get leftover cleartext fragments of a web page that the previous visitor downloaded, or parts of an email that someone else just sent, or even memory blocks with the server’s own private cryptographic keys in them.
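To make the over-read idea concrete, here’s a toy Python model of the Heartbleed mistake. It is not OpenSSL’s actual code: the pretend “heap”, its layout and the function names are our own invention. The buggy function copies in the genuine payload, then echoes back as many bytes as the requester claims it sent, so whatever happens to sit next door in memory leaks out. The fixed version simply refuses requests that overstate their length, roughly in the spirit of the official patch.

```python
def make_heap() -> bytearray:
    """A pretend 64-byte chunk of server memory (hypothetical layout)."""
    heap = bytearray(64)
    leftover = b"user=alice;pw=hunter2"    # made-up data left behind by an earlier visitor
    heap[16:16 + len(leftover)] = leftover
    return heap


def buggy_heartbeat(heap: bytearray, payload: bytes, claimed_len: int) -> bytes:
    heap[0:len(payload)] = payload          # copy in the genuine payload
    return bytes(heap[0:claimed_len])       # BUG: trusts claimed_len, not len(payload)


def fixed_heartbeat(heap: bytearray, payload: bytes, claimed_len: int) -> bytes:
    if claimed_len > len(payload):          # refuse requests that overstate their length
        return b""
    heap[0:len(payload)] = payload
    return bytes(heap[0:claimed_len])


reply = buggy_heartbeat(make_heap(), b"bird", 40)
print(reply)   # b'bird', some zero bytes, then the earlier visitor's leftover data
```

Note that the attacker chose how many bytes came back, but not which bytes: that depended entirely on what happened to be lying around.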

Plentiful needles in endless haystacks

Attackers typically exploit bleed-based bugs simply by triggering them over and over again automatically, collecting a giant pile of unauthorised data, and then combing through it later at their leisure.

Needles are surprisingly easy to extract from haystacks if (a) you can automate the search by using software to do the hard work for you, (b) you don’t need answers right away, and (c) you’ve got lots and lots of haystacks, so you can afford to miss many or even most of the needles and still end up with a sizeable stash.
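As an example of the automated combing in (a), here’s a minimal Python sketch that hunts through a pile of captured data for the “16 consecutive ASCII digits” pattern we mentioned earlier. The sample blobs are made up, and a real crook would add further checks to weed out false positives; the point is simply how little code the search needs.

```python
import re

# 16 ASCII digits in a row, not part of a longer run of digits.
SIXTEEN_DIGITS = re.compile(rb"(?<![0-9])[0-9]{16}(?![0-9])")


def find_candidates(blobs):
    """Yield (blob_index, offset, digits) for every isolated 16-digit run."""
    for index, blob in enumerate(blobs):
        for match in SIXTEEN_DIGITS.finditer(blob):
            yield index, match.start(), match.group()


# Made-up "captured" data standing in for a big pile of leaked chunks.
captured = [
    b"\x00" * 16,                         # all zeros: nothing to see
    b"xx" + b"4" + b"1" * 15 + b"yy",     # a card-number-shaped run of 16 digits
    b"12345678901234567890",              # 20 digits: too long, so ignored
]

for hit in find_candidates(captured):
    print(hit)   # (1, 2, b'4111111111111111')
```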

Other bleed-named bugs include Rambleed, which deliberately provoked temporary memory errors in order to guess what was stored in nearby parts of a RAM chip, and Optionsbleed, where you could ask a web server over and over again which HTTP options it supported, until it sent you a reply with someone else’s data in it by mistake.

By analogy, a bleed-style bug is a bit like a low-key lottery that doesn’t have any guaranteed mega-jackpot prizes, but where you get a sneaky chance to buy 1,000,000 tickets for the price of one.

Well, famous Google bug-hunter Tavis Ormandy has just reported a new bug of this sort that he’s dubbed Zenbleed, because the bug applies to AMD’s Zen 2 range of high-performance processors.

Unfortunately, you can exploit the bug from almost any process or thread on a computer and pseudorandomly bleed out data from almost anywhere in memory.

For example, a program running as an unprivileged user inside a guest virtual machine (VM) that’s supposed to be sealed off from the rest of the system might end up with data from other users in that same VM, or from other VMs on the same computer, or from the host program that is supposed to be controlling the VMs, or even from the kernel of the host operating system itself.

Ormandy was able to create proof-of-concept code that leaked about 30,000 bytes of other people’s data per second per processor core, 16 bytes at a time.

That might not sound like much, but 30KB/sec is sufficient to expose nearly 3GB over the course of a day, with data that’s accessed more regularly (including passwords, authentication tokens and other data that’s supposed to be kept secret) possibly showing up repeatedly.
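For anyone who wants to check the sums, here they are in a few lines of Python, using Ormandy’s figure of roughly 30,000 bytes per second per core (the constant and function names are our own):

```python
BYTES_PER_SECOND = 30_000          # Ormandy's approximate leak rate, per core
SECONDS_PER_DAY = 24 * 60 * 60     # 86,400
CHUNK = 16                         # bytes leaked per successful hit


def leaked_per_day(bytes_per_second: int = BYTES_PER_SECOND, cores: int = 1) -> int:
    """Total bytes leaked in 24 hours at a steady rate."""
    return bytes_per_second * SECONDS_PER_DAY * cores


per_core = leaked_per_day()
print(f"{per_core:,} bytes per core per day (~{per_core / 1e9:.1f} GB)")
print(f"{per_core // CHUNK:,} separate 16-byte chunks")
# 2,592,000,000 bytes per core per day (~2.6 GB)
# 162,000,000 separate 16-byte chunks
```

If an attacker could keep a leaking thread busy on every core (an assumption on our part), the daily total would scale with the number of cores, e.g. leaked_per_day(cores=8).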

And with the data exposed in 16-byte chunks, attackers are likely to find plenty of recognisable fragments in the captured information, helping them to sift and sort the haystacks and focus on the needles.

The price of performance

We’re not going to try to explain the Zenbleed flaw here (please see Tavis Ormandy’s own article for details), but we will focus on the reason why the bug showed up in the first place.

As you’ve probably guessed, given that we’ve already alluded to processes, threads, cores and memory management, this bug is a side-effect of the internal “features” that modern processors pack in to improve performance as much as they can, including a neat but bug-prone trick known in the trade as speculative execution.

Loosely speaking, the idea behind speculative execution is that if a processor core would otherwise be sitting idle, perhaps waiting to find out whether it’s supposed to go down the THEN or the ELSE path of an if-then-else decision in your program, or waiting for a hardware access control check to determine whether it’s really allowed to use the data value that’s stored at a specific memory address or not…

…then it’s worth ploughing on anyway, and calculating ahead (that’s the “speculative execution” part) in case the answer comes in handy.

If the speculative answer turns out to be unnecessary (because it worked out the THEN result when the code went down the ELSE path instead), or ends up off-limits to the current process (in the case of a failed access check), it can simply be discarded.
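Here’s a deliberately simplified Python sketch of that “guess, work ahead, then keep or discard” idea. Real processors do this in hardware, many instructions at a time; the function and its parameters are hypothetical and exist only to show the control flow.

```python
from typing import Callable, Tuple, TypeVar

T = TypeVar("T")


def run_with_speculation(
    predict_then: bool,
    resolve_condition: Callable[[], bool],
    then_path: Callable[[], T],
    else_path: Callable[[], T],
) -> Tuple[T, bool]:
    """Return (result, speculation_was_kept) in this toy model."""
    # 1. Don't sit idle: compute the path we guess will be taken.
    speculative = then_path() if predict_then else else_path()
    # 2. The slow answer finally arrives (e.g. a comparison that was waiting on RAM).
    actual = resolve_condition()
    if actual == predict_then:
        return speculative, True   # good guess: the work is already done
    # 3. Bad guess: throw the speculative result away and take the right path.
    return (then_path() if actual else else_path()), False


result, kept = run_with_speculation(True, lambda: False,
                                    lambda: "THEN result", lambda: "ELSE result")
print(result, kept)   # ELSE result False
```

In this toy, “discarding” just means ignoring a Python value. In a real CPU, every side-effect of the speculative work has to be undone perfectly, which is exactly where things can go wrong.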

You can think of speculative execution like a quiz show host who peeks at the answer at the bottom of the card while they’re asking the current question, assuming that the contestant will attempt to answer and they’ll need to refer to the answer straight away.

But in some quiz shows the contestant can say “Pass”, skipping the question with a view to coming back to it later on.

If that happens, the host needs to put the unused answer out of their mind, and plough on with the next question, and the next, and so on.

But if the “passed” question does come round again, how much will the fact that they now know the answer in advance affect how they ask it the second time?

What if they inadvertently read the question differently, or use a different tone of voice that might give the contestant an unintended hint?

After all, the only true way to “forget” something entirely is never to have known it in the first place.

The trouble with vectors

In Ormandy’s Zenbleed bug, now officially known as CVE-2023-20593, the problem arises when an AMD Zen 2 processor performs a special instruction that exists to set multiple so-called vector registers to zero at the same time.

Vector registers are used to store data used by special high-performance numeric and data processing instructions, and in most modern Intel and AMD processors they are a chunky 256 bits wide, unlike the 64 bits of the CPU’s general purpose registers used for traditional programming purposes.

These special vector registers can typically be operated on either 256 bits (32 bytes) at a time, or just 128 bits (16 bytes) at a time.

In fact, for historical reasons, today’s CPUs have two completely different sets of vector-style machine code instructions: a newer bunch known as AVX (advanced vector extensions), which can work with 128 or 256 bits, and an older, less powerful group of instructions called SSE (streaming SIMD extensions, where SIMD in turn stands for single-instruction/multiple data), which can only work with 128 bits at a time.

Annoyingly, if you run some new-style AVX code, then some old-style SSE code, and then some more AVX code, the SSE instructions in the middle mess up the top 128 bits of the new-fangled 256-bit AVX registers, even though the SSE instructions are, on paper at least, only doing their calculations on the bottom 128 bits.

So the processor quietly saves the top 128 bits of the AVX registers before switching into backwards-compatible SSE mode, and then restores those saved values when you next start using AVX instructions, thus avoiding any unexpected side-effects from mixing old and new vector code.
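The save-and-restore dance can be sketched as a toy model in Python, treating each 256-bit register as a plain integer. The class and method names are hypothetical, we’ve modelled SSE “messing up” the top half as simply wiping it, and no particular CPU works exactly like this; the point is that the hidden save and restore puts the top 128 bits back, at the cost of a mode transition each time.

```python
MASK128 = (1 << 128) - 1
MASK256 = (1 << 256) - 1


class ToyVectorUnit:
    """Hypothetical model of the hidden AVX/SSE state saving described above."""

    def __init__(self, nregs: int = 16):
        self.ymm = [0] * nregs     # each entry holds a 256-bit value
        self.mode = "AVX"
        self.saved_upper = []
        self.transitions = 0       # on real CPUs, each one costs extra clock cycles

    def _switch_to(self, mode: str) -> None:
        if mode == self.mode:
            return
        if mode == "SSE":
            # Quietly save the top 128 bits of every register before old-style code runs.
            self.saved_upper = [r >> 128 for r in self.ymm]
        else:
            # Put them back when AVX code resumes, keeping whatever SSE left in the bottom half.
            self.ymm = [(up << 128) | (r & MASK128)
                        for up, r in zip(self.saved_upper, self.ymm)]
        self.mode = mode
        self.transitions += 1

    def avx_write(self, i: int, value: int) -> None:
        self._switch_to("AVX")
        self.ymm[i] = value & MASK256

    def avx_read(self, i: int) -> int:
        self._switch_to("AVX")
        return self.ymm[i]

    def sse_write(self, i: int, value: int) -> None:
        self._switch_to("SSE")
        self.ymm[i] = value & MASK128   # in this toy, SSE wipes the top half


unit = ToyVectorUnit()
unit.avx_write(0, (0xAAAA << 128) | 0x1111)   # AVX code fills all 256 bits
unit.sse_write(0, 0x2222)                     # old SSE code touches the bottom half
value = unit.avx_read(0)                      # AVX again: top half restored
print(hex(value >> 128), hex(value & MASK128), unit.transitions)   # 0xaaaa 0x2222 2
```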

But this save-and-restore process hurts performance, which both Intel’s and AMD’s programming guides warn you about strongly.

AMD says:

There is a significant penalty for mixing SSE and AVX instructions when the upper 128 bits of the [256-bit-wide] YMM registers contain non-zero data.

Transitioning in either direction will cause a micro-fault to spill or fill the upper 128 bits of all sixteen YMM registers.

There will be an approximately 100 cycle penalty to signal and handle this fault.

And Intel says something similar:

The hardware saves the contents of the upper 128 bits of the [256-bit-wide] YMM registers when transitioning from AVX to SSE, and then restores these values when transitioning back […]

The save and restore operations both cause a penalty that amounts to several tens of clock cycles for each operation.

To save the day, there’s a special vector instruction called VZEROUPPER that zeros out the top 128 bits of each vector register in one go.

By calling VZEROUPPER, even if your own code doesn’t really need it, you signal to the processor that you no longer care about the top 128 bits of those 256-bit registers, so they don’t need saving if an old-school SSE instruction comes along next.

This helps to speed up your code, or at least stops you from slowing down anyone else’s.

And if this sounds like a bit of a kludge…

…well, it is.

It’s a processor-level hack, if you like, just to ensure that you don’t reduce performance by trying to improve it.
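Using AMD’s “approximately 100 cycle” figure purely as an illustrative number, here’s a tiny Python sketch of why VZEROUPPER helps. In this simplified model, following AMD’s wording, the transition penalty applies only when some register has non-zero data in its top 128 bits, and VZEROUPPER clears exactly those bits while leaving the bottom halves alone. The function names are ours, not any real API.

```python
MASK128 = (1 << 128) - 1
PENALTY_CYCLES = 100   # AMD's "approximately 100 cycle" figure, for illustration only


def transition_cost(ymm: list) -> int:
    """Toy penalty for switching between AVX and SSE code."""
    dirty = any(r >> 128 for r in ymm)   # is any top half non-zero?
    return PENALTY_CYCLES if dirty else 0


def vzeroupper(ymm: list) -> list:
    """Zero the top 128 bits of every register, keeping the bottom 128 bits."""
    return [r & MASK128 for r in ymm]


regs = [0] * 16
regs[3] = (0xDEAD << 128) | 0xBEEF        # AVX code left data in a top half
print(transition_cost(regs))               # 100
print(transition_cost(vzeroupper(regs)))   # 0
```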

Where does CVE-2023-20593 come in?

All of this fixation on performance led Ormandy to his Zenbleed data leakage hole, because:

- AVX code is extremely commonly used for non-mathematical purposes, such as working with text. For example, the popular Linux programming library glibc uses AVX instructions and registers to speed up the function strlen() that’s used to find the length of text strings in C. (Loosely speaking, strlen() using AVX code lets you search through 16 or more bytes of a string at a time looking for the zero byte that denotes where it ends, instead of using a conventional loop that checks byte-by-byte.)
- AMD’s Zen 2 processors don’t reliably undo VZEROUPPER when a speculative execution code path fails. When “unzeroing” the top 128 bits of a 256-bit vector register because the processor guessed wrongly and the VZEROUPPER operation needs to be reversed, the register sometimes ends up with 128 bits (16 bytes) “restored” from someone else’s AVX code, instead of the data that was actually there before.
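The strlen() trick in the first bullet can be illustrated in Python. This sketch (function names hypothetical) contrasts a byte-by-byte loop with one that examines 16 bytes per step. In real vector code, the “is there a zero anywhere in this block?” test is a single comparison instruction; here, bytes.find() merely stands in for it. glibc’s actual implementation is hand-tuned assembler and differs in many details, such as how it handles memory alignment.

```python
def strlen_bytewise(buf: bytes) -> int:
    """Conventional C-style loop: check one byte at a time for the zero terminator."""
    n = 0
    while buf[n] != 0:
        n += 1
    return n


def strlen_chunked(buf: bytes, chunk: int = 16) -> int:
    """Examine `chunk` bytes per step, as vectorised code does with one comparison."""
    offset = 0
    while True:
        block = buf[offset:offset + chunk]
        if not block:
            raise ValueError("no terminating zero byte")
        zero_at = block.find(0)   # stands in for a single "compare 16 bytes with zero" instruction
        if zero_at != -1:
            return offset + zero_at
        offset += chunk


text = b"The quick brown fox jumps over the lazy dog\x00leftover"
print(strlen_bytewise(text), strlen_chunked(text))   # 43 43
```

The chunked version needs only three trips round its loop for this 43-character string, instead of 43.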

In real life, it seems that programmers rarely use VZEROUPPER in ways that need reversing, or else this bug might have been found years ago, perhaps even during development and testing at AMD itself.

But by experimenting carefully, Ormandy figured out how to craft AVX code loops that not only repeatedly triggered the speculative execution of a VZEROUPPER instruction, but also regularly forced that instruction to be rolled back and the AVX registers “unzeroed”.

Unfortunately, lots of other conventional programs use AVX instructions heavily, even if they aren’t the sort of application, such as a game, an image rendering tool, a password cracker or a cryptominer, that you’d expect to need high-speed vector-style code.

Your operating system, email client, web browser, web server, source code editor, terminal window – pretty much every program you use routinely – almost certainly uses its fair share of AVX code to improve performance.

So, even under very typical conditions, Ormandy sometimes ended up with the ghostly remnants of other programs’ data mixed into his own AVX data, which he could detect and track.

After all, if you know what’s supposed to be in the AVX registers after a VZEROUPPER operation gets rolled back, it’s easy to spot when the values in those registers go awry.
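Here’s a cartoon of the problem, and of how an attacker could notice it, written as a Python toy. It’s loosely modelled on the bullet points above: a speculative VZEROUPPER hands a register’s storage back for reuse too early, another program reuses that storage, and the rollback then “restores” whatever the other program wrote. Every name here (ToyRegisterFile, the slot pool, the rule that the most recently freed slot is reused first) is our own simplification, not AMD’s actual design.

```python
ZERO16 = bytes(16)


class ToyRegisterFile:
    """Cartoon of register renaming: logical registers point at shared storage slots."""

    def __init__(self, nslots: int = 4):
        self.slots = [ZERO16] * nslots    # storage shared by every program on the core (in this toy)
        self.free = list(range(nslots))   # slots available for reuse
        self.slot_of = {}                 # register name -> slot holding its top half
        self.zeroed = {}                  # register name -> "reads as zero" flag

    def write_upper(self, reg: str, value: bytes) -> None:
        slot = self.free.pop()            # grab the most recently freed slot
        self.slots[slot] = value
        self.slot_of[reg] = slot
        self.zeroed[reg] = False

    def read_upper(self, reg: str) -> bytes:
        return ZERO16 if self.zeroed[reg] else self.slots[self.slot_of[reg]]

    def speculative_vzeroupper(self, reg: str) -> None:
        self.zeroed[reg] = True
        self.free.append(self.slot_of[reg])   # slot released before the guess is confirmed

    def rollback_vzeroupper(self, reg: str) -> None:
        # The flag is undone, but nothing checks whether the slot was reused meanwhile.
        self.zeroed[reg] = False


MARKER = b"A" * 16                            # 16 bytes the attacker knows it wrote

rf = ToyRegisterFile()
rf.write_upper("attacker", MARKER)
rf.speculative_vzeroupper("attacker")         # zeroed on a guess...
rf.write_upper("victim", b"password=hunter2") # ...another program reuses the slot...
rf.rollback_vzeroupper("attacker")            # ...and the guess turns out to be wrong
leaked = rf.read_upper("attacker")
if leaked != MARKER:                          # not what we wrote? Then it's someone else's data
    print("ghost data:", leaked)
```

In the toy, an undisturbed rollback gives back MARKER, so any other value signals a leak; that’s the detection trick described above.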

In Ormandy’s own words:

[B]asic operations like strlen(), memcpy() and strcmp() [find text string length, copy memory, compare text strings] will use the vector registers – so we can effectively spy on those operations happening anywhere on the system! It doesn’t matter if they’re happening in other virtual machines, sandboxes, containers, processes, whatever.

As we mentioned earlier, if you’ve got a daily pool of nearly 3GB of unstructured, pseudorandomly selected ghost data per CPU core, you might not hit the lottery equivalent of a multi-million-dollar jackpot.

But you’re almost certain to win the equivalent of thousands of $1000 prizes, without riskily poking your nose into other people’s processes and memory pages like traditional “RAM snooping” malware needs to do.

What to do?

CVE-2023-20593 was disclosed responsibly, and AMD has already produced a microcode patch to mitigate the flaw.

If you have a Zen 2 family CPU and you’re concerned about this bug, speak to your motherboard vendor for further information on how to get and apply any relevant fixes.
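If you aren’t sure what’s inside your computer, a rough first check on Linux is to look in /proc/cpuinfo for an AMD processor in family 23 (0x17). Be aware that family 0x17 covers the earlier Zen and Zen+ chips as well as Zen 2, so this only narrows things down; the model number, or your vendor, will tell you more. The function below is a hypothetical helper of our own.

```python
def looks_like_amd_family_17h(cpuinfo_text: str) -> bool:
    """Rough first check: is the first CPU listed an AMD family 0x17 (23) chip?

    Family 0x17 covers Zen, Zen+ and Zen 2, so True means "maybe Zen 2",
    not "definitely vulnerable".
    """
    vendor = family = None
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        key, value = key.strip(), value.strip()
        if key == "vendor_id" and vendor is None:
            vendor = value
        elif key == "cpu family" and family is None:
            family = int(value)
    return vendor == "AuthenticAMD" and family == 0x17
```

On a Linux box, you’d call it as looks_like_amd_family_17h(open("/proc/cpuinfo").read()).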

On operating systems with software tools that support tweaking the so-called MSRs (model-specific registers) in your processor that control its low-level configuration, there’s an undocumented flag (bit 9) you can set in a poorly-documented model register (MSR 0xC0011029) that apparently turns off the behaviour that causes the bug.

MSR 0xC0011029 is referred to in the Linux kernel mailing list archives as the DE_CFG register, apparently short for decode configuration, and other well-known bits in this register are used to regulate other aspects of speculative execution.

We’re therefore guessing that DE_CFG[9], which is shorthand for “bit 9 of MSR 0xC0011029”, decides whether to allow instructions with complex side-effects such as VZEROUPPER to be tried out speculatively at all.

Obviously, if you never allow the processor to zero out the vector registers unless you already know for sure that you’ll never need to “unzero” those registers and back out the changes, this bug can never be triggered.

The fact that this bug wasn’t spotted until now suggests that real-world speculative execution of VZEROUPPER doesn’t happen very often, and thus that this low-level hack/fix is unlikely to have a noticeable impact on performance.

Ormandy’s article includes a description of how to reconfigure the relevant MSR bit in your Zen 2 processor on Linux and FreeBSD.

(You will see DE_CFG[9] described as a chicken bit, jargon for a configuration setting you turn on to turn off a feature that you’re scared of.)

OpenBSD, we hear, will be forcing DE_CFG[9] on automatically on all Zen 2 processors, thus suppressing this bug by default in search of security over performance; on Linux and other BSDs, you can do it with command line tools (root needed) such as wrmsr and cpucontrol.
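On Linux, wrmsr (from the msr-tools package) is the usual tool for this, but as an illustration of what “set DE_CFG[9]” actually means, here’s an untested Python sketch that goes through the kernel’s /dev/cpu/N/msr interface, where the file offset is the MSR number and each read or write is 8 bytes. It needs root and the msr kernel module loaded; the function names are ours, and because the setting doesn’t survive a reboot, it would need reapplying each time the computer starts up.

```python
import os

DE_CFG = 0xC0011029      # MSR number, as given above
CHICKEN_BIT = 1 << 9     # DE_CFG[9]


def with_chicken_bit(value: int) -> int:
    """Return the MSR value with bit 9 set, leaving every other bit untouched."""
    return value | CHICKEN_BIT


def set_chicken_bit_on_cpu(cpu: int) -> None:
    """Linux only, root only, needs the msr driver. Untested sketch."""
    fd = os.open(f"/dev/cpu/{cpu}/msr", os.O_RDWR)
    try:
        # The msr driver treats the file offset as the MSR number; values are 8 bytes, little-endian.
        current = int.from_bytes(os.pread(fd, 8, DE_CFG), "little")
        os.pwrite(fd, with_chicken_bit(current).to_bytes(8, "little"), DE_CFG)
    finally:
        os.close(fd)
```

Each core has its own copy of the MSR, so you’d need to call set_chicken_bit_on_cpu() once per core, which is what wrmsr’s -a (all processors) option does for you. Reading the value first and OR-ing in bit 9 avoids clobbering the other bits in DE_CFG, which control other aspects of speculative execution.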

Mac users can relax because non-ARM Macs all have Intel chips, as far as we know, rather than AMD ones, and Intel processors are not known to be vulnerable to this particular bug.

Windows users may need to fall back on unofficial kernel driver hacks (avoid these unless you really know what you’re doing, because of the security risks of booting up in “allow any old driver” mode), or to install the official WinDbg debugger, enable local kernel debugging, and use a WinDbg script to tweak the relevant MSR.

(We admit that we haven’t tried any of these mitigations, because we don’t have an AMD-based computer handy at the moment; please let us know how you get on if you do!)
