It’s Pi Day – or World Pi Day, if you prefer, or even Universe Pi Day, given that Pi is a universal constant.

As you may remember from school mathematics, Pi is the cool and amazing ratio you get when you divide the distance around a circle by the distance across it – the number comes out identically for every circle, no matter how big or how small.

Quizzically put, it’s Pi that makes bicycle wheels go round and round smoothly, rather than going kerbump, kerbump on a flat spot or gaflop, gaflop on a bulgy bit.

(In mathematics, you’re supposed to use the Latinny word circumference, meaning carrying around, and the Greeky work diameter, meaning measurement across, but it’s the words around and across that matter.)

The Ancient Greeks really struggled with Pi – indeed, they struggled to the point that it’s almost a satirical joke that we now call the constant after a Greek letter, and even write the lower-case Greek letter π to denote it.

Ancient Greek mathematicians were really smart, but they weren’t very open-minded, so they struggled with what we now call irrational numbers.

To the Greeks, numbers of that sort weren’t just irrational, they were unbelievable, inconceivable, impossible, even sacriligeous, so the Pythagoreans simpy denied the possibility that they might exist.

All numbers, they insisted, could be turned into fractions – in other words, they could be represented as one whole number divided by another, like one third (1/3), three quarters (3/4), or all-but-one in a million (999,999/1,000,000, or exactly 0.999999 in decimal notation).

There was no limit to how complicated a fraction could be, but eventually you should, could, would – MUST, insisted the Greeks – find a fraction to represent any possible number.

Fractions considered useful

In the days before modern computers, fractions that came out close to the value of Pi were very useful, because you could work them into calculations using log tables more easily than using a lengthy number in decimal notation.

For example, if you approximate Pi as 3.1416, that’s the same as writing the fraction 31416/10000.

Now, the volume of a sphere is (4πr3)/3, so you could work out the volume of a sphere of radius 176 metres by hand like this:

176m x 176m x 176m x 4 / 3 x 31,416 / 10,000 = 22,836,399m3 Accurate to 3 parts in a million.

Dividing by 10,000 is easy – just chop off four digits at the end – but that five-digit multiplication by 31416 is a real headache, especially if you decide to do it exactly using long multiplication.

(No video showing above? Watch on YouTube.)

But the fraction 22/7, coming out at approximately 3.1429, is good enough for calculations to within 0.1%.

If you use 22/7 instead of 3.1416, you get an easy multiplication followed by a single-digit division, which is easier overall than a five-digit multiplication:

176m x 176m x 176m x 4 / 3 x 22 / 7 = 22,845,537m3 Accurate to 5 parts in 10,000.

22 = (10+1)×2, so to multiply N by 22 you can just add a zero to N in your head for the multiplication by 10, add the original N back into the result to get 11N, and then double it for 22N.

Another popular fraction for Pi when more accuracy is needed is the easily-memorised 355/113.

This approximation of Pi was widely taught in schools and engineering colleges – despite its simplicity, it comes out at 3.14159292, a number that differs from the decimal expansion of Pi only at the seventh decimal place:

176m x 176m x 176m x 4 / 3 x 355 / 113 = 22,836,347m3 Accurate to 1 part in 10 million.

Fractions always imperfect

No matter how hard you try, you’ll never find a fraction that exactly represents Pi, because there isn’t one.

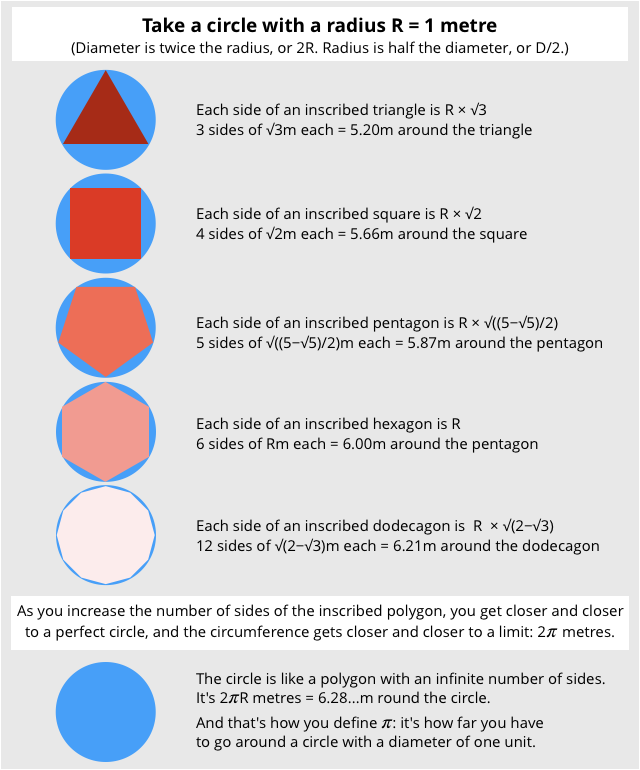

You can get really close, for example by drawing a polygon that fits exactly inside a circle and then increasing the number of sides of the polygon until you can’t even see the tiny segments that are between the polygon and the circle.

You’ll never quite get to the true value of Pi, however, because there will always be some teeny parts at the edge of the true circle that you haven’t covered up.

You can’t get downwards to Pi from the outside of the circle, either.

You can try, by drawing polygons that just skim the outside of the circle, and the distance around your polygon will decrease every time you make it more accurate by adding more sides.

But even though you’re now homing in on Pi from above, you’ll never have a true circle or finish calculating its true circumference.

Simply put: Pi is trivial to describe and even to define, but it’s impossible to write down its value, no matter how many sides your polygon has, how complicated your fractions become, or how many decimal digits you churn out.

This drove the Ancient Greeks crazy – in fact, there’s a legend that says the mathematician Hippasus was deliberately drowned to stop him revealing a dreadful secret he’d proved, namely that the square root of 2, just like Pi, could never be turned into a fraction.

A troubled history is no discouragement

As you can imagine, the troubled history of irrational numbers, and the fruitlessness of trying to calculate them to a conclusion, hasn’t stopped computer scientists from working on Pi.

Indeed, just in time for Pi Day 2019, Emma Haruka Iwao, a software engineer from Seattle in Washington, cranked our a world record 10 Pi trillion decimal digits of the famous number:

BEST. #PiDay. EVER.

— Google for Developers (@googledevs) March 14, 2019

See how Seattle-based, Googler @Yuryu, used Google Cloud to calculate 31.4 trillion digits of Pi for a new world record. This is the first time π has been calculated using the cloud. Take that supercomputers!

Learn more here → https://t.co/LbDJ9C0oyJ pic.twitter.com/0luCUjyxda

Just to be clear, that’s an American trillion, where the tri- refers to the act of multiplying a base number of 1000 by another 1000 three times, for 1000×1000×1000 × 1000, or 1012. In British trillions, now rarely used in the Anglophone world, the tri- means the act of multiplying a base number of 1 by 1,000,000 three times, for 1,000,000 × 1,000,000 × 1,000,000, or 1018. The full number in this case was 31,415,926,535,897 decimal digits long.

So, there you have it!

Pi, figured out to way more precision than you could ever need, but still without the perfection you get just by writing the single letter π.

What to do?

There is a cybersecurity story in here – two, in fact.

- Precision can’t always be achieved. So never, ever pretend to be precise when you aren’t. As a programmer, be very careful that you don’t churn out and present answers that give a false sense of security or correctness.

We’ve written before, for example, about mobile phone networks and mapping apps that, when faced with computer geolocation data that is no more precise than “somewhere in the continental USA”, insist on giving GPS co-ordinates that accurately locate the geographic centre of the country.

They recklessly – and dangerously! – turn raw data that says, “We literally have no detail about where to look” into allegedly precise information that insists, “We took an average of sorts, and now, by magic, we know exactly to within 10 metres.”

Apparently, the geocentre of the USA is a farmhouse in Kansas whose residents are understandably tired of being “pinpointed” precisely for crimes they didn’t commit, spam they never sent, orders they never placed, phones they’ve never owned, meetings they never called.

- Write dates sensibly in your logs. Follow the RFC 3339 standard for date and time on the internet. Writing

Month Day, Year, as North Americans do, is a dreadful practice in logs because the format is hard to read and doesn’t sort easily.

We’re stuck with having Pi Day on March 14, as it’s called in North America, using the weird North American data format, because it’s the only way to get 3.14 to look like a date – if 14 were to denote the month, Pi Day could never exist.

However, RFC 3339 urges you to write dates and times consistently, using an unambiguous format that, when sorted alphabetically, automatically ends up in chronological order.

Simply put, if you wanted to denote 4pm on this year’s Pi day in the city of Amsterdam, you’d say something like this:

2019-03-14T15:00:00.000Z

Write the year in the Christian Era, always with all four digits; then the month and day with leading zeros; then a handy T as a separator; then the time using the 24-hour clock, with a consistent number of fractional digits; and finally Z to indicate Zulu time, meaning you’ve got rid of any pesky timezone by converting to Universal Time Co-ordinated (UTC).

Technically, RFC 3339 allows you to include an explicit timezone (e.g -8 or +10) instead of converting to Zulu time, but we urge you always to convert and always to use the Z marker to say so.

When it comes to data and timestamps: say what you mean, and mean what you say.

Happy #PiDay!

Bryan

> Writing Month Day, Year, as North Americans do, is a dreadful practice in logs because the format is hard to read and doesn’t sort easily.

It was sometime in 2004 when I finally recognized this and began my own practice of naming all recurrent or archival files in $(date "+%Y%m%d") format (or when more specificity warranted, $(date "+%Y%m%d-%H%M%S")). Reading them is now second nature, and it drives my coworkers crazy.

:,)

Some recent purposes find me using $(date "+%s"), which no human I’ve met can read efficiently.

That said, I must confess that we Americans can be an incredibly stubborn lot; after fourteen full years of this improved practice I still feel strange saying the date any way other than “March fourteenth, two thousand nineteen.”

Great article as always, Duck.

Bryan

IIRC this is more globally used–and I tried adopting it for a while–but I find it even more disorienting:

Fourteen March, two thousand nineteen.

Paul Ducklin

At least if you write “14 MAR 2019” everything lines up (assuming you using leading zeros, e.g. “04 JUL 1976”) and the numbers can’t be confused because the MMM acts as a separator.

But it doesn’t sort easily!

Bryan

Indeed; it sorts awfully. It was a list of database exports at an old job when this realization hit me. One of those facepalm moments when one questions why the knowledge wasn’t obvious before.

Paul Ducklin

SMTP and many other datestamps were “English prose style” dates for years, and though trivial to generate were a high drama to parse…

…and that’s why RFC 3339 came along. So you were hardly doing anything unusual!

Spryte

Same issue with me.

My boss could not sort dates in his databases and came to me for assistance. I simply told him if he entered dates by Year Month Date (option for time) they could be easily sorted. He argued that was not the correct format for our area. It was then I suggested that since he used a Form to enter data we could use his format in the form but a little code would put the date in the database as Year Month Date and thusly be sort-able. We made an empty copy, added the code and it and tested and re-tested. It worked like a charm. Then we added more code so others could query and report with dates in local format. Phew!!

Paul Ducklin

RFC 3339 wants some extra markup to make it easier for humans, namely dashes in YYYY-MM-DD, colons in HH:MM:SS, and a “T” between the two. Makes it a bit longer but it stands out so clearly and sortably as a date that it’s worth a few bytes IMO.

Andrew King

You seem to have missed the divide by 3 in all your calculations.

The answer for 176 m is 22,836,345.907 metres cubed

Paul Ducklin

Ouch, I did, too! Fixed, thanks.

Anonymous

Unless you don’t live in America and it’s 14/03/2019, which looks nothing like pi.

Paul Ducklin

It’s almost as though you didn’t read the article :-)

Fred

Would 4pm not be 1600 rather than 1500? Sorry to quibble. I do agree about date format – as a nurse workin in Australia I’ve seen orders written by American doctors which could have led to problems.

Paul Ducklin

16:00 in Amsterdam in mid-March is 15:00 Zulu time, because Amsterdam is in the Central European Time (CET) timezone, or UTC+1.

By subtracting the one hour that Amsterdam is ahead of (or, for example, by adding the seven hours that San Francisco is behind) UTC, you make the timestamp of every log item directly comparable and thus directly and easily sortable into the order they happened.

You also neatly get rid of the confusion that is daylight savings – to compare historical timestamps that are saved in “local time” you need a complete list of where daylight savings applied and when, something that has varied unsystematically and confusingly over the years in many countries. By eliminating timezone and DST at the moment you write the log entry you only need DST information for the current year.

Alan

1000×1000×1000 is an American billion, not trillion. The tri prefix is nonsensical in American.

1000

You didn’t read that sidebar correctly – it’s (1000×1000×1000) × 1000.

Although I have only ever lived in Commonwealth countries, all of which drive on the left and put petrol in their cars, and where the word “billion” used to mean a million million, I have come to consider the American system to be more logical, as well as more commonplace.

So I switched my allegiance many years ago, just as I started saying “dayda” instead of “darter” when I meant data.

The mil- in million refers to mille, or 1000. And a million is a thousand thousands. Therefore it’s just as reasonable to say that the bil- in billion implies that *one* of those factors of 1000 appears twice as it is to say that the bil- means that *both* of them appear twice.

In fact, a British billion would more logically be called a bimillion (1,000,000 × 1,000,000, and then a trimillion, and so on.

If “million” is taken to refer inclusively to both of the 1000s in 1000×1000, then you’d surely keep the full word million as the last part of the name for a million million, but if you think of million as a mill-ion, where the mil- part doesn’t refer to both 1000s in the product 1000×1000, but only to one of them, then the Americans have it free and clear.

Thus, million = 1000 increased by one factor of mille.

Billion = 1000 increased by two factors of mille.

N-illion = 1000 increased by a factor of milleN.

Steve Pomfret

Just love reading things like this. Written so anyone can understand. A perfect distraction from Lockdown.

Paul Ducklin

Thanks, glad you liked it!