Kees Cook is a Google techie and security researcher whose interests include the Linux Kernel Self Protection Project.

The idea of “self-protection” doesn’t mean giving up on trying to create secure code in the first place, of course.

It may sound ironic, but I'm happy to accept that writing secure code requires that you simultaneously write code that is predicated on insecurity.

The law doesn’t require me to wear a helmet to ride my bicycle, but I do. Not because I expect to ride badly and crash, but because of injuries I’ve suffered in the past that would have been completely avoided with a helmet. Unscientifically, I’ve decided that wearing a helmet seems to keep me more actively aware of how certain unexpected types of head injury can happen – glancing blows from ill-placed signage or badly-arranged loads on the back of pickup trucks, for example. It’s as though my daily decision to wear my helmet actually reduces the probability I’ll need it. It makes me more visible in traffic, too. That’s an excellent outcome, as far as I can see.

Cook decided to try to be scientific about historical Linux kernel bugs rather than just going on a “head feeling.”

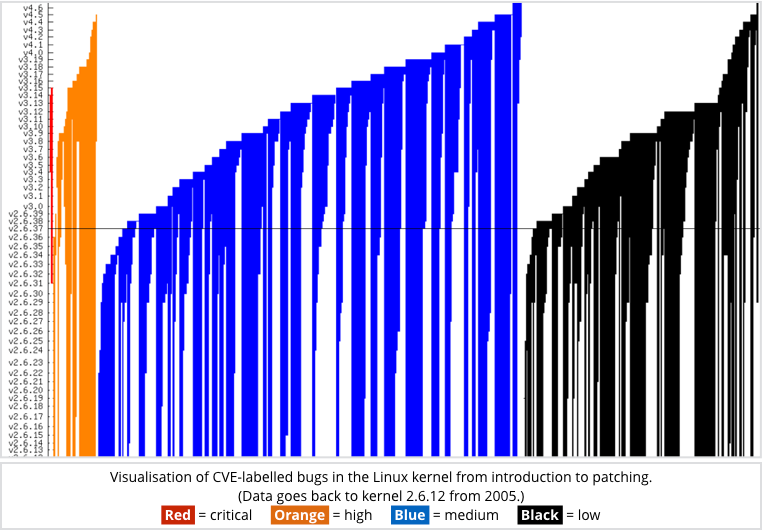

He looked at 557 documented kernel bugs that could be tied to specific CVE-numbered security holes, running from CVE-2008-7316 to CVE-2016-2782.

Because the Linux source code tree and the history of its many changes are a matter of public record, Cook was able to locate the source code changes that fixed CVE-labelled bugs, and then trace them back to the corresponding changes that introduced the bugs in the first place.

In other words, he could get an objective view of how long bugs tended to hang around after they could theoretically first have been exploited.
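To give a flavour of how this kind of tracing can be automated, here is a minimal Python sketch. It is not necessarily how Cook did it. It assumes that the fixing commit carries a `Fixes:` tag, a convention many kernel commit messages follow, naming the abbreviated hash of the commit that introduced the bug. It also treats the introducing commit's date as a stand-in for "the moment the bug became exploitable." The commit message and dates below are hypothetical. In practice you would pull real dates from the repository, for example with `git show -s --format=%ci <hash>`.

```python
import re
from datetime import date

# Assumed convention: a fix commit may contain a line such as
#   Fixes: 0123456789ab ("subsystem: summary of the buggy commit")
# naming the commit that introduced the bug (12 to 40 hex digits).
FIXES_TAG = re.compile(r"^Fixes:\s+([0-9a-f]{12,40})\b", re.MULTILINE)


def introducing_commits(fix_message: str) -> list[str]:
    """Return the hashes named in any Fixes: tags of a commit message."""
    return FIXES_TAG.findall(fix_message)


def exposure_years(introduced: date, fixed: date) -> float:
    """Years between the bug-introducing commit and the fixing commit."""
    if fixed < introduced:
        raise ValueError("fix predates the introducing commit")
    return (fixed - introduced).days / 365.25


if __name__ == "__main__":
    # Hypothetical commit message and dates, for illustration only.
    msg = (
        "tty: fix race in example driver\n"
        "\n"
        'Fixes: 0123456789ab ("tty: add example driver")\n'
        "Signed-off-by: A Developer <dev@example.org>\n"
    )
    print(introducing_commits(msg))  # the hash of the introducing commit
    print(round(exposure_years(date(2010, 1, 1), date(2015, 1, 1)), 2))
```

If you repeat this for each CVE fix, you get a per-bug exposure time. Averages such as the ones below can then be computed from that data. Bugs whose fixes lack a `Fixes:` tag would still need to be traced by hand, for example with `git blame`.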

The results are intriguing.

Cook detailed them in a chart that shows the different categories of bug and how long each bug was around for; the coloured parts of the graph indicate periods of exposure:

Click on image to see Cook’s original.

Fortunately, there were only two bugs designated critical in his survey: CVE-2014-0038 and CVE-2014-0196 (also known as the n_tty or "got root" bug, which is how we referred to it at the time).

One was around for close to five years; the other for just over two.

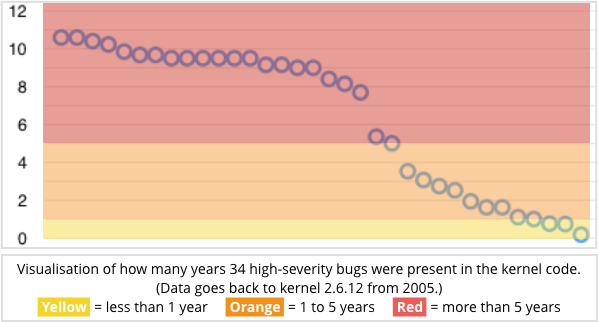

Of the 34 bugs deemed to be of high severity, the mean exposure time was just over six years, and some had been hanging around for more than a decade.

More than 90% of the high-severity bugs (31 out of 34) had been theoretically exploitable for more than a year, as we visualise here:

As Cook rather pointedly writes:

While we’re getting better at fixing bugs, we’re also adding more bugs. And for many devices that have been built on a given kernel version, there haven’t been frequent (or some times any) security updates, so the bug lifetime for those devices is even longer.

That’s the reason he gives for investing time and effort into self-protection projects as well as hunting and purging bugs: we need to improve our collective safety, even in a world where there are so many unapplied patches.

Of course, that’s a call-to-arms that urges us to welcome patches into our ecosystem as quickly as we can. (Internet of Things vendors, please take note!)

There’s a huge difference between “patch available,” which is good in theory, and “patch deployed,” which is good in practice.

As Cook argues, the crooks are keeping a careful watch on new code that's added to the Linux kernel, because it's a great place to find brand new bugs.

Therefore we owe it to ourselves to keep a careful watch for code changes that take old bugs out.

In conclusion, if you will pardon a vehicular analogy: don’t wear a bike helmet to give you an excuse for riding shabbily (or merely because your country’s lawmakers say you have to).

Wear it as part of adopting a safer and more proactive all-round attitude…

Image of ladybirds courtesy of Rulexip via Wikipedia under the Creative Commons Attribution-Share Alike 3.0 Unported licence.

Somewhat Reticent

Why introduce so many bugs, instead of following best practice?

nick5990

Are there examples of how to fix types of bugs? What would an untrained user look for in code for bug-hunting? Suggestions on books, PDFs, videos, etc that are good sources for people to learn from to fix bugs in code?