Generative Adversarial Networks (GANs)[1] are another popular approach to generative modeling which, much like Variational AutoEncoders (VAEs)[2], aim to model input data distributions in an (often lower-dimensional) latent space. In contrast to VAEs, however, the intuition behind GANs is straightforward. In fact, if you’ve played the game of Dictionary, you’re already familiar with it.

If you haven’t played Dictionary, the idea is that players are given an obscure word from the dictionary, for which they must each make up a believable but fake definition, in an attempt to fool each other into confusing the real and fake definitions. This often leads to fun, deceptively plausible-sounding definitions like, “Petrichor – noun, a laboratory instrument used for stabilizing containers containing viscous samples during experiments involving vibration or emulsification.”

Image credit: Miliot Science

If you didn’t know, “petrichor” is actually the earthy smell produced when rain falls on dry ground, the one that leads people to say, ‘smells like rain.’

In a distilled, boiled-down, and salted-to-taste nutshell, Dictionary is about creating fake definitions that sound real. Over several rounds, players continuously get better at spotting fakes (“that didn’t quite parse right”, or “the grammar in that definition just sounded a little too awkward!”) and also at producing them. To put it another way and start bridging the analogy gap, Dictionary is a game where players Generate definitions with an Adversarial attitude of trying to fool other players. Each player is basically a “Generative Adversarial Person” (GAP), with the objective of winning the game. Framed as a game-theoretic optimization process, players are simultaneously pursuing two competing goals for any given definition: 1) the player generating the definition is trying to maximize the likelihood that the other players label it as “real”, while 2) the other players are trying to maximize the number of definitions that they correctly discriminate as real or fake. In game-theoretic parlance, this is a type of “minimax” (or “MinMax”) game.

While Dictionary is simple, the minimax game that GANs play is even simpler. It is better characterized by a very specific variant of Dictionary with only two players: one player is always guessing, trying to discriminate real definitions from fake ones, and the other player is always trying to generate plausible definitions. Let x be a true word/definition pair, G(z) be a word/definition pair generated by the generating player, and D(.) be the answer (0 for “fake”, 1 for “real”) given by the discriminating player. Let E[.] be the expected value of a quantity over many rounds of the game. Then the minimax value function, which the generating player wants to minimize and the discriminating player wants to maximize, becomes:

V(D,G)=E[D(x)] + E[1-D(G(z))].
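As a rough illustration of the value function above, the sketch below estimates V(D, G) by averaging over many simulated rounds. Everything in it is a made-up toy: "definitions" are stood in for by single numbers, the real ones cluster around 5, and the discriminating player simply answers 1 ("real") above a fixed threshold. None of these choices come from the GAN literature; they only show how the two expectation terms behave.

```python
import random

def estimate_value(discriminator, real_samples, fake_samples):
    """Monte Carlo estimate of V(D, G) = E[D(x)] + E[1 - D(G(z))]."""
    real_term = sum(discriminator(x) for x in real_samples) / len(real_samples)
    fake_term = sum(1 - discriminator(g) for g in fake_samples) / len(fake_samples)
    return real_term + fake_term

# Hypothetical toy data: real items are numbers near 5. A poor forger
# produces numbers near 0, a good forger produces numbers near 5.
rng = random.Random(0)
real = [rng.gauss(5.0, 1.0) for _ in range(1000)]
poor_fakes = [rng.gauss(0.0, 1.0) for _ in range(1000)]
good_fakes = [rng.gauss(5.0, 1.0) for _ in range(1000)]

def threshold_discriminator(x):
    """Hard-labelling discriminating player: 1 for "real", 0 for "fake"."""
    return 1 if x > 2.5 else 0

v_poor = estimate_value(threshold_discriminator, real, poor_fakes)
v_good = estimate_value(threshold_discriminator, real, good_fakes)
print(f"V against a poor forger: {v_poor:.3f}")
print(f"V against a good forger: {v_good:.3f}")
```

With 0/1 answers, V lies between 0 and 2. The better the forger, the lower the value the discriminating player can achieve, which is exactly why the generating player wants to minimize it.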

Setting aside our Dictionary analogy, in a typical GAN setup, you have two players, a generator and a discriminator, which take the form of neural networks. The generator is optimized to fool the discriminator into “thinking” that the samples it makes up are real, while the discriminator is optimized to label the generator’s made-up samples as fake and legitimate samples from the dataset as real. And like two hamsters playing hide-and-seek until their human interrupts to do some cleaning, the two networks optimize more and more against each other, until some intrusive human researcher decides enough is enough.

Image Credit: Delinquent Hamsters, the show you didn’t know you need in your life

To generate data, samples from a latent seed space, Z, are fed to the generator and transformed via the generator’s learned weights into samples intended to fool the discriminator. The value function is the same as in our Dictionary example, where x ∈ X and z ~ p_z(Z). During training, samples in both data space and seed space are typically drawn uniformly. In practice, minibatch stochastic gradient descent or some variation thereof is used as the optimization algorithm.
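To make the alternating optimization concrete, here is a minimal sketch of minibatch gradient steps on the value function from the Dictionary example. It is a toy under loud assumptions, not how real GANs are built: the "generator" is a one-dimensional linear map G(z) = a*z + b, the "discriminator" is a logistic function D(x) = sigmoid(w*x + c) with real-valued output, the data are numbers drawn around 4, and the gradients are written out by hand instead of using a deep learning library.

```python
import math
import random

def sigmoid(t):
    """Numerically stable logistic function."""
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)

def value(params, xs, zs):
    """Minibatch estimate of V(D, G) = E[D(x)] + E[1 - D(G(z))]."""
    a, b, w, c = params
    real = sum(sigmoid(w * x + c) for x in xs) / len(xs)
    fake = sum(1.0 - sigmoid(w * (a * z + b) + c) for z in zs) / len(zs)
    return real + fake

def gradients(params, xs, zs):
    """Hand-derived gradient of the minibatch value w.r.t. (a, b, w, c)."""
    a, b, w, c = params
    ga = gb = gw = gc = 0.0
    for x in xs:
        d = sigmoid(w * x + c)
        s = d * (1.0 - d) / len(xs)   # sigmoid derivative, averaged over batch
        gw += s * x
        gc += s
    for z in zs:
        g = a * z + b                 # generated sample G(z)
        d = sigmoid(w * g + c)
        s = d * (1.0 - d) / len(zs)
        gw -= s * g
        gc -= s
        ga -= s * w * z
        gb -= s * w
    return ga, gb, gw, gc

def train(steps=200, batch=32, lr=0.05, seed=0):
    """Alternate one discriminator ascent step and one generator descent step."""
    rng = random.Random(seed)
    a, b, w, c = 1.0, 0.0, 0.1, 0.0   # arbitrary starting weights
    for _ in range(steps):
        xs = [rng.gauss(4.0, 1.0) for _ in range(batch)]     # data minibatch
        zs = [rng.uniform(-1.0, 1.0) for _ in range(batch)]  # seed minibatch
        # Discriminator step: gradient ascent on V over (w, c).
        _, _, gw, gc = gradients((a, b, w, c), xs, zs)
        w, c = w + lr * gw, c + lr * gc
        # Generator step: gradient descent on V over (a, b).
        ga, gb, _, _ = gradients((a, b, w, c), xs, zs)
        a, b = a - lr * ga, b - lr * gb
    return a, b, w, c

a, b, w, c = train()
print(f"generator: G(z) = {a:.3f} * z + {b:.3f}")
print(f"discriminator: D(x) = sigmoid({w:.3f} * x + {c:.3f})")
```

Once training stops, only `a` and `b` are needed to produce new samples; the discriminator weights `w` and `c` play no part in generation. A short run like this is not expected to converge, and the step size and step count are arbitrary.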

During data generation, the discriminator network is discarded and samples are generated by seeding the generator network (see footnote 1). Note that the precise quantitative formulation of GAN value functions may vary (cf. [3]). Crucially, unlike in our Dictionary example, the output of the discriminator is typically a real-valued (or bounded real-valued) quantity that is, in theory, monotonic with respect to how real or fake the input appears.

Advantages and Disadvantages of GANs

Upon successful training, GANs exhibit high perceptual sample quality compared to other generative models, e.g., VAEs, and in some respects better accommodate discrete data considerations. This is not surprising, since plausible appearance is in some sense built into the GAN optimization objective. Variational methods, by contrast, are more concerned with matching conditional data distributions in aggregate, so an average over samples, which might not appear realistic to a GAN discriminator, might still have reasonable likelihood under variational assumptions.

However, training GANs is a complicated process. For certain datasets, particularly those with high-dimensional samples, GAN training may be unstable, and convergence might not occur at all. In other cases, a phenomenon known as “mode collapse” may occur, where the GAN generates only a few different images regardless of the initial seed, i.e., it does not provide a valid sampling of the data space. Moreover, GANs do not provide an explicit parametric probability density for the samples they generate, so nonparametric techniques like Parzen windows must be employed to estimate one.
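The Parzen window idea mentioned above can be sketched in a few lines: place a small Gaussian kernel on every generated sample and score held-out real data under the resulting density. The example below is a one-dimensional toy with made-up data (two modes at -2 and 2) and an arbitrarily chosen bandwidth; the "generators" are simulated by sampling directly, so it only illustrates the estimator and how mode collapse would show up in it.

```python
import math
import random

def parzen_log_likelihood(x, samples, bandwidth):
    """Log of a Gaussian Parzen-window density estimate at a 1-D point x."""
    norm = math.log(len(samples) * bandwidth * math.sqrt(2.0 * math.pi))
    exponents = [-0.5 * ((x - s) / bandwidth) ** 2 for s in samples]
    top = max(exponents)  # log-sum-exp trick for numerical stability
    return top + math.log(sum(math.exp(e - top) for e in exponents)) - norm

def mean_log_likelihood(test_points, samples, bandwidth=0.3):
    """Average Parzen log-likelihood of held-out points under the samples."""
    total = sum(parzen_log_likelihood(x, samples, bandwidth) for x in test_points)
    return total / len(test_points)

rng = random.Random(0)

def two_modes(n):
    """Hypothetical data distribution with modes at -2 and +2."""
    return [rng.gauss(rng.choice([-2.0, 2.0]), 0.5) for _ in range(n)]

held_out = two_modes(200)                               # real held-out data
healthy = two_modes(500)                                # generator covering both modes
collapsed = [rng.gauss(2.0, 0.5) for _ in range(500)]   # generator stuck on one mode

ll_healthy = mean_log_likelihood(held_out, healthy)
ll_collapsed = mean_log_likelihood(held_out, collapsed)
print(f"healthy generator:   {ll_healthy:.2f}")
print(f"collapsed generator: {ll_collapsed:.2f}")
```

The collapsed generator scores much worse because held-out points from the missing mode receive almost no density. The estimate is sensitive to the bandwidth, which in practice would need to be tuned, for example on a validation set.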

While GANs have yielded impressive results for certain data sets, these results do not impart to the viewer the grief that went into appropriate hyperparameter selection to get the GAN to converge at all.

Z-Space Interpolation and Conditional GANs

Intriguingly, provided that GANs converge, their latent seed spaces, or Z-spaces, exhibit quasi-linear semantic properties similar to those obtained from some types of word embeddings, where, for example, given a vector representation R(.) of a word, R(King) – R(Man) + R(Woman) ≈ R(Queen). GAN Z-spaces, however, exhibit such properties for a variety of data modalities.

For example, Fig. 1 shows results generated by NVIDIA researchers by adding and subtracting Z-space vectors according to bottom-right = bottom-left + top-right – top-left. All images apart from the three corner ones (BL, TR, TL) were generated by seeding with a vector that weights the corner Z-space vectors in proportion to the generated image’s location in the grid. Since the top-left image, which contains a blond woman, is subtracted, the blond and female attributes are attenuated. The bottom-left image contains a blond-haired man and the top-right image contains a brunette woman. The bottom-right image, generated from a linear combination of the corresponding Z-space vectors, contains a “blond”- and “female”-nullified and “brunette”-amplified mixture of the bottom-left and top-right images, resulting in a brunette male. Other features can be observed in this combination, but they are less obvious. While it is difficult to quantify perception of facial attributes, generated images seeded with a linear combination of vectors in Z-space appear to exhibit a quasi-linear combination of facial attributes in input space.

Image Credit: Nvidia Developer

Figure 1: By interpolating in the Z-space obtained from the CelebA dataset according to specific facial attributes, the generator yields gradually morphed yet photo-realistic face images. Only the top-left, bottom-left, and top-right images are real. The remainder of the images were obtained by feeding the generator a linear combination of the three images’ Z-space vectors, weighted according to the location in the grid. The weighted vectors corresponding to BL and TR were added, while the one corresponding to TL was subtracted. Videos of the celebrity generation/morphing process can be found on YouTube, here and here.
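The grid of seed vectors described for Fig. 1 can be sketched as follows. This is one plausible reading of "weighting according to the location in the grid", assumed here rather than taken from the NVIDIA work: each cell's seed is the top-left seed plus a fraction of the (BL – TL) direction for its row and a fraction of the (TR – TL) direction for its column. The three-dimensional corner seeds are made-up numbers, and the generator itself is omitted.

```python
def z_grid(z_tl, z_bl, z_tr, rows, cols):
    """Seed vectors for a rows x cols grid spanned by three corner seeds.

    Requires rows >= 2 and cols >= 2.
    """
    grid = []
    for i in range(rows):
        u = i / (rows - 1)        # 0 at the top row, 1 at the bottom row
        row = []
        for j in range(cols):
            v = j / (cols - 1)    # 0 at the left column, 1 at the right column
            row.append([tl + u * (bl - tl) + v * (tr - tl)
                        for tl, bl, tr in zip(z_tl, z_bl, z_tr)])
        grid.append(row)
    return grid

# Hypothetical corner seeds (real Z-spaces have many more dimensions).
z_tl = [1.0, 0.0, 2.0]
z_bl = [0.0, 1.0, 2.0]
z_tr = [1.0, 0.0, -1.0]

grid = z_grid(z_tl, z_bl, z_tr, rows=4, cols=4)
print("bottom-right seed:", grid[-1][-1])
```

At the bottom-right corner both fractions equal 1, so the seed reduces to BL + TR – TL, matching the relation in the text. Each seed in the grid would then be passed through the trained generator to produce the corresponding image.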

What if we want to generate only certain types of images (or other modalities – e.g., audio, video, text), e.g., faces with particular attributes or clothing of a particular style? Much like VAEs, GANs can also be trivially conditioned [4] on a scalar/vector label of metadata. Let y be the metadata in numeric form. This metadata vector, y, is then used as an input to both generator and discriminator, yielding class-conditional generated data.
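One simple way to feed the metadata to both networks, assumed here for illustration, is to append y to each network's usual input. The sketch below only builds those inputs; the networks themselves are left out, and the label count, seed size, and sample size are arbitrary placeholders.

```python
import random

def one_hot(label, num_classes):
    """Encode an integer class label as a numeric metadata vector y."""
    return [1.0 if k == label else 0.0 for k in range(num_classes)]

def conditional_generator_input(z, y):
    """Input handed to the generator: seed vector z joined with metadata y."""
    return list(z) + list(y)

def conditional_discriminator_input(x, y):
    """Input handed to the discriminator: sample x joined with the same y."""
    return list(x) + list(y)

rng = random.Random(0)
y = one_hot(2, num_classes=4)                    # hypothetical class 2 of 4
z = [rng.uniform(-1.0, 1.0) for _ in range(8)]   # 8-dimensional seed (assumed size)
x = [0.0] * 16                                   # stand-in for a 16-dimensional sample

g_in = conditional_generator_input(z, y)
d_in = conditional_discriminator_input(x, y)
print(len(g_in), len(d_in))
```

Because the discriminator sees the same y as the generator, it can penalize samples that look realistic but do not match the requested label. At generation time, choosing y selects the kind of sample while z supplies the variation within it.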

Image Credit: Rick and Morty

Other Applications of GANs

GANs have been applied, with varying success, to many types of data, and it would be too monotonous for a blog post to enumerate them comprehensively. However, it is worth noting a few more esoteric and interesting applications beyond standard computer vision benchmarks.

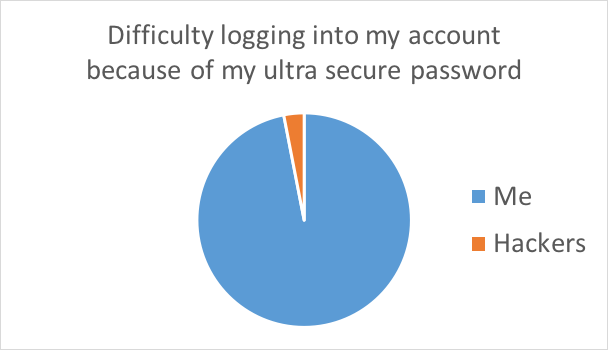

In [5], Hitaj et al. report matching 51-73% more passwords from a data leak when GAN-generated guesses were combined with the HashCat cracking tool than with HashCat alone. This strengthens the case for using random passwords substantially, since GANs can be used to learn likely candidates for human-created passwords (perhaps even conditioned on specific types of people).

Another application of GANs that is relevant to security researchers is Steganography – the practice of hiding secret messages and information within transmitted data, typically visual/audio, with the motivation of keeping not only the content of the secret, but also the transmission of the secret, confidential.

If you’re looking for a cool way to send secret messages to your friends, or to set up a trail of messages revealing the location of your top secret organization to those who are worthy, well, look no further! A group of researchers from the Chinese Academy of Sciences has used GANs to generate images with embedded messages [6]. And if you’re looking to save yourself a trip to the mall, in [7], “Be Your Own Prada”, Zhu et al. demonstrated how effective GANs can be at making pictures of people wearing dresses that they’ve never actually worn.

Despite their lifecycle of forgery and deception, GANs actually end up serving a noble cause: They learn the underlying rules of some given concept, like a face or a password, to a sufficiently high degree of fidelity that they then can generate or “imagine” examples of that concept that they’ve never actually seen. Less nobly, you could probably also use a GAN to generate a picture of your dad in a dress if you wanted. But who knows, maybe he’s into that sort of thing.

Image Credit: High Maintenance

1 This is analogous to removing the encoder portion of a VAE.

References

[1] I. Goodfellow et al., “Generative adversarial nets,” in Advances in Neural Information Processing Systems, 2014, pp. 2672–2680.

[2] D. P. Kingma and M. Welling, “Auto-encoding variational Bayes,” arXiv preprint arXiv:1312.6114, 2013.

[3] M. Arjovsky, S. Chintala, and L. Bottou, “Wasserstein GAN,” arXiv preprint arXiv:1701.07875, 2017.

[4] M. Mirza and S. Osindero, “Conditional generative adversarial nets,” arXiv preprint arXiv:1411.1784, 2014.

[5] B. Hitaj, P. Gasti, G. Ateniese, and F. Perez-Cruz, “PassGAN: A deep learning approach for password guessing,” arXiv preprint arXiv:1709.00440, 2017.

[6] H. Shi, J. Dong, W. Wang, Y. Qian, and X. Zhang, “SSGAN: Secure steganography based on generative adversarial networks,” arXiv preprint arXiv:1707.01613, 2017.

[7] S. Zhu, S. Fidler, R. Urtasun, D. Lin, and C. C. Loy, “Be your own Prada: Fashion synthesis with structural coherence,” arXiv preprint arXiv:1710.07346, 2017.
